Born from an open call by the University of the Arts London, this project is an AR wayfinding system created for the 2025 graduate degree show at Camberwell College of Arts. The collaboration paired my 3D modelling and AR development expertise with a graphic designer's visual direction to create a navigational experience that goes beyond traditional signage.

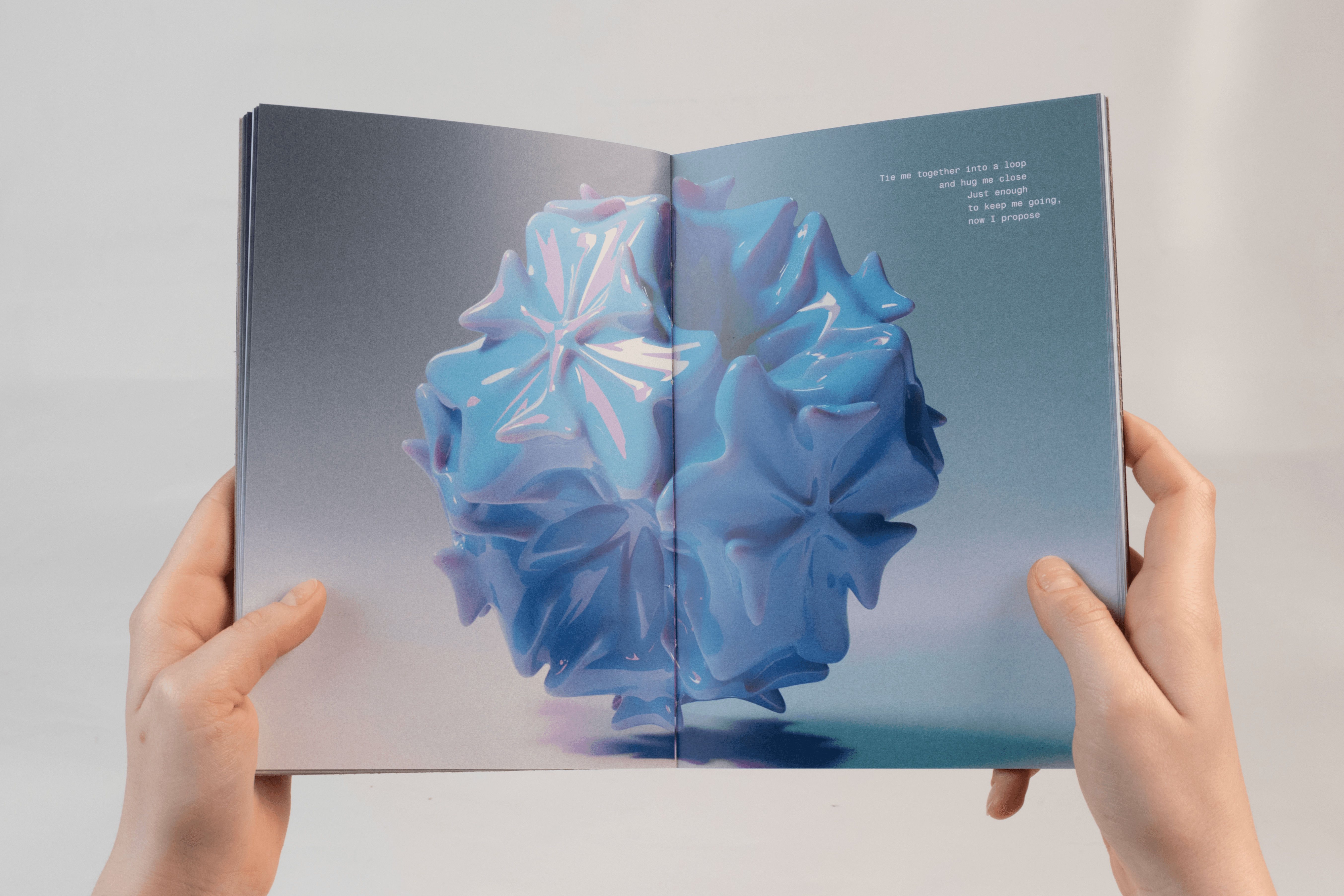

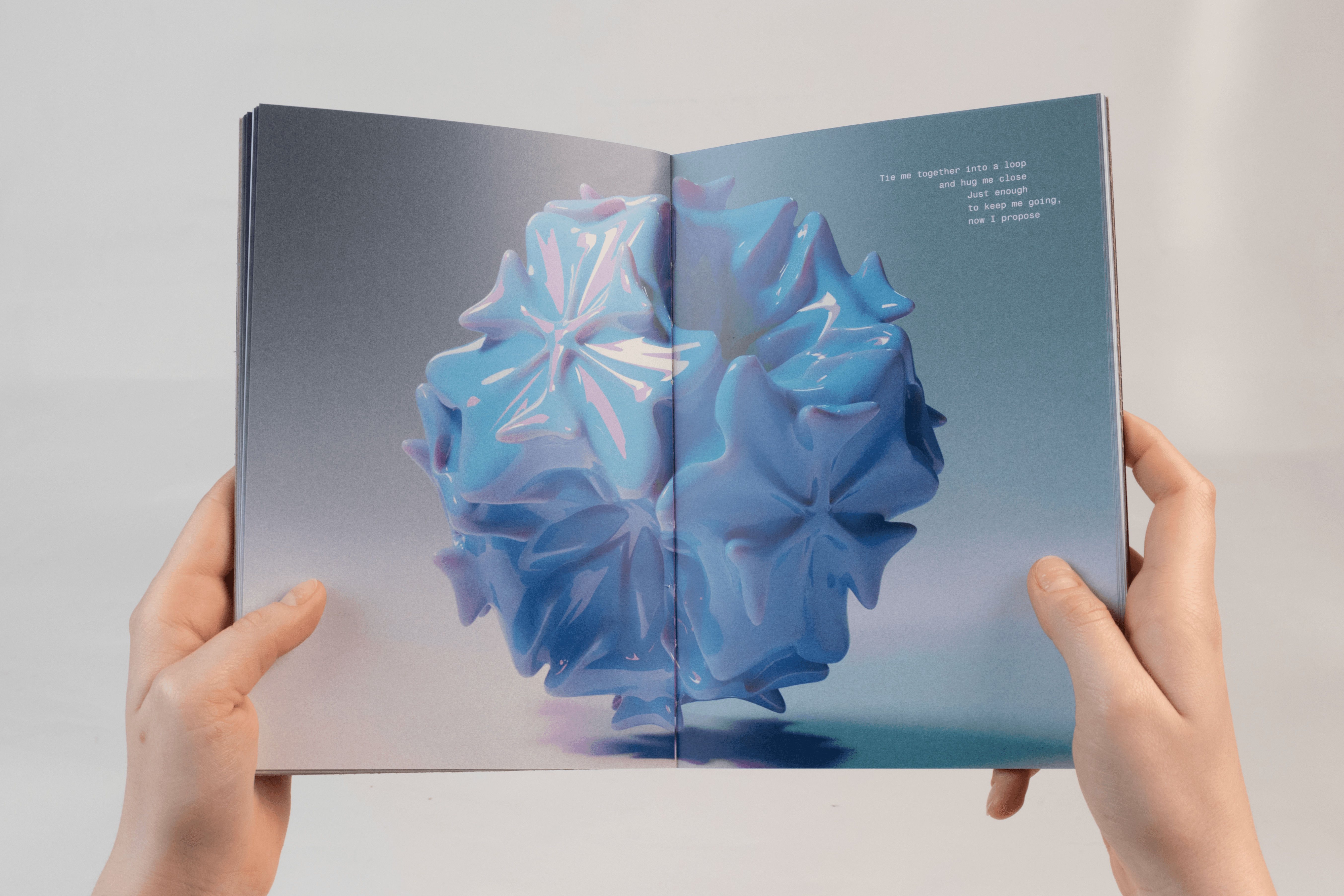

The project's conceptual foundation draws on the tools and techniques used by the students of Camberwell's three design courses: Illustration, Graphic Design, and Interior and Spatial Design. From these, a series of abstract 3D shapes was developed to form both the visual identity of the physical signage and the interactive elements within the AR experiences.

My role centred on developing the AR web application, which scans QR codes on the signs to trigger location-specific AR installations. The goal of these installations was to encourage visitors to the college to engage more actively with the art displayed by the students. Rather than simply directing visitors from point to point, the AR experiences were designed to shift perspective, placing visitors in a creative position and letting them take part in art-making.

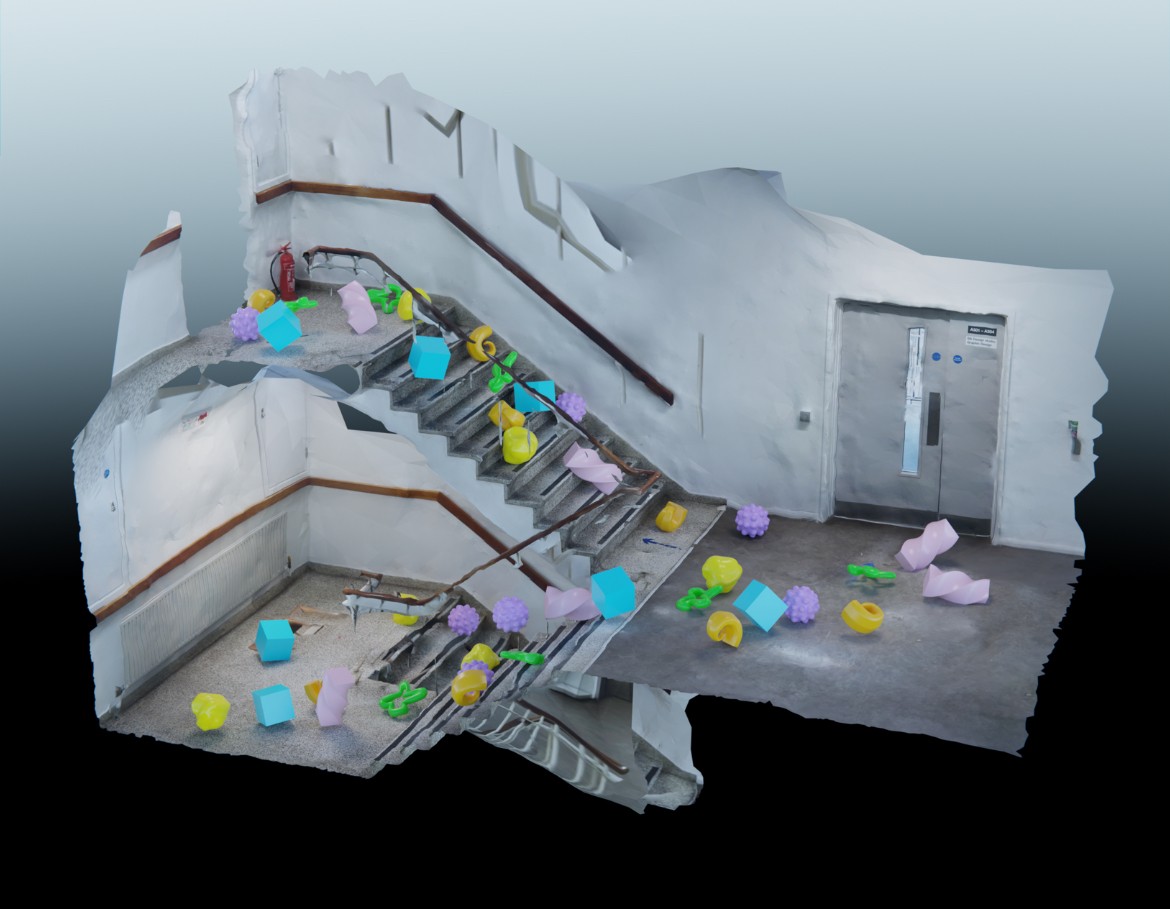

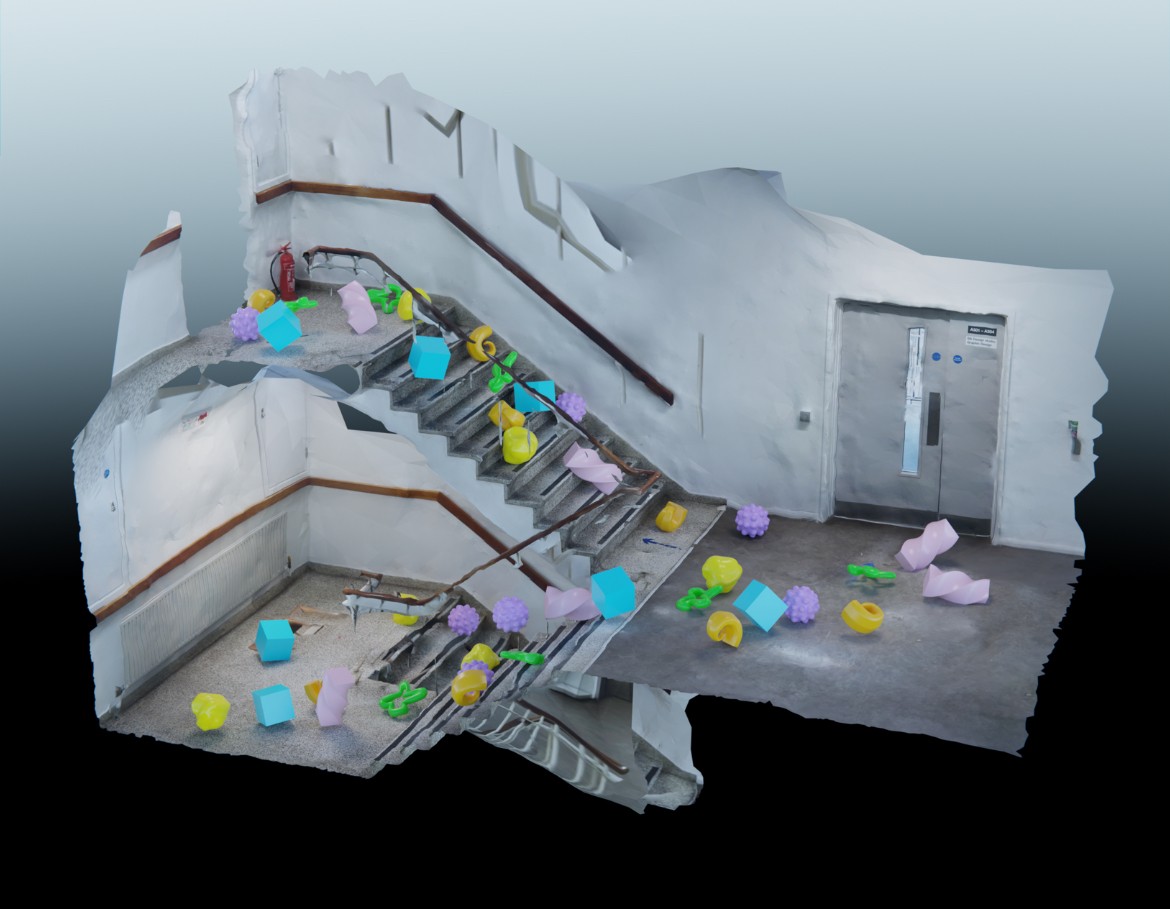

The activities ranged from manipulating building blocks to form abstract scenes in the Interior and Spatial Design block to generating quirky lines of poetry by clicking floating shapes in the Illustration corridor. By experimenting with these discipline-specific activities, users could momentarily inhabit the creative mindset that defines each course, transforming wayfinding into an immersive introduction to the university's creative culture.

Process

This project began with extensive research into the three design pathways at Camberwell College of Arts. We conducted interviews to gauge which tools the students most liked to use in their practice. From there, we assigned each course 10 shapes that we designed to capture the essence of what the students had told us.

During the prototyping phase we made extensive use of 3D scanning and photogrammetry to capture the environments we would be working in. I then imported these scans into Blender and simulated the various AR interactions, which let me test the experiences before they were built and work remotely.

While my partner developed the 2D aspects of the project, I began work on the AR installations once all five had been confirmed. I used Niantic’s 8th Wall editor to create and launch the experiences.